Driver Signatures from Car Diagnostic Data captured using a Raspberry Pi: Part 3 (Building a data model and predicting driver)

In the first and second part of this series I described how to set up the hardware to read data from the OBD port using a Raspberry Pi and then upload it to the cloud. You should read the first part and second part of this series before you read this article.

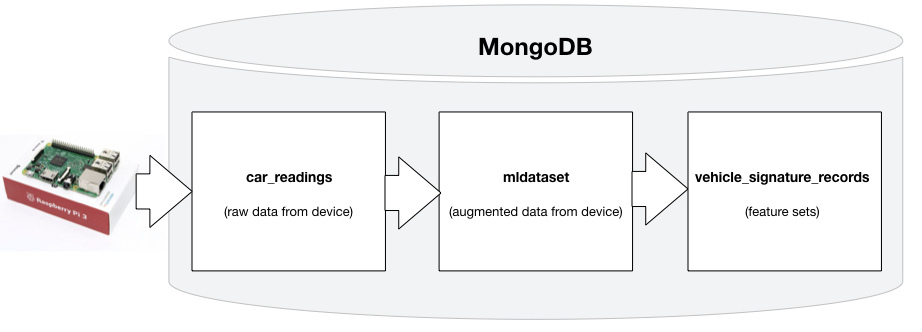

Having done all the work to capture and transport all the data to the cloud, let us figure out what can be done on the cloud to introduce Machine Learning. To understand the concepts given in this article you will need to be familiar with Javascript and Python. Also, I am using MongoDB as my database - so you will need to know the basics of a document-oriented database to follow the example code here. MongoDB is not only my storage engine here, but also my compute engine. By that, I mean that I am using the database's scripting language (Javascript) to pre-process data that will ultimately be used for machine learning. The Javascript code given herein executes (in a distributed manner) inside the MongoDB database. (Some people get confused when they see Javascript, assuming that it requires a server like NodeJS to run - not here.)

Introduction to the Solution

To set the introduction, I will describe the following three tasks in this article:

- Read the raw records and augment them with derived information that is not directly sent by the Raspberry Pi, generating extra features for machine learning. Derivatives based on the time interval between readings can be used to derive instantaneous velocity, angular velocity and incline. These are inserted into the derived records when we save them to another MongoDB collection (a 'table' in relational database parlance) to be used later for generating the feature sets. I will be calling this collection 'mldataset' in my database.

- Read the 'mldataset' and extract features from the records. The feature set is saved into another collection called 'vehicle_signature_records'. This is an involved process since there are so many fields found in the raw records. In my case, the feature sets are basically three statistical aggregates (minimum, average and maximum) of all values over a 15 second period. The other research papers on this subject take the same approach, but the time interval over which the aggregates are taken varies based on the frequency of the readings. The recommended frequency is 5 Hz, i.e. 1 record-set per 0.2 seconds. But as I mentioned in article 2 of this series, we are unable to read data that fast on a serial connection over ELM 327. The maximum speed that I have been able to observe (mostly in modern cars) is 1 record-set in 0.7 seconds. Thus a 15 second aggregation makes more sense in our scenario. Due to this, the accuracy of the prediction may be affected - but we will accept that as a constraint. The solution methodology remains the same though.

- Apply a learning algorithm to the feature-set to learn the driver behavior. The model then needs to be deployed on a machine in the cloud. In real-time we need to calculate the same aggregates over the same time interval (15 seconds) and feed them into the model to come up with a prediction. To confirm the driver we will need to take readings over several intervals (5 minutes will give 20 predictions) and then use the value with the maximum count (i.e. the modal value).
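The last step of the third task, picking the modal value out of several 15-second predictions, can be sketched in a few lines of Python. This is only an illustration: the function name `confirm_driver` and the integer labels are hypothetical and not taken from the repository.

```python
from collections import Counter


def confirm_driver(window_predictions):
    """Return the most frequent predicted label and its share of the votes."""
    if not window_predictions:
        raise ValueError("need at least one prediction")
    label, count = Counter(window_predictions).most_common(1)[0]
    return label, count / len(window_predictions)


# 5 minutes of 15-second windows gives 20 predictions (hypothetical labels).
predictions = [3] * 14 + [7] * 4 + [1] * 2
driver, share = confirm_driver(predictions)
print(driver, share)
```

The share of votes is returned alongside the label so that a caller could refuse to confirm a driver when the majority is weak.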

Augmenting raw data with derivatives

This is a very common scenario in IoT applications. When generated data comes from a human being, it always has useful information at the surface. All you need to do is scan the logs and extract it. An example of this is finding the interests of the user based on user-clicks in a shopping cart scenario - all the items that the user has seen on the web-site are directly found in the logs. However, an IoT device is dumb, has no emotion and has no special interests. All data coming from an IoT device is the same consistent boring stream. So where do you dig to find useful information? The answer to this question is in the time-derivatives. The variation in values between one reading and the next provides useful insight into the behavior. Examples of these are velocity (derivative of displacement found from GPS readings), acceleration (derivative of velocity) and jerk (derivative of acceleration). So you see, augmenting raw data with this extra information is extremely useful for IoT applications.
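To make the idea of time-derivatives concrete, here is a minimal Python sketch that uses finite differences between consecutive readings. The sample values and the helper name `finite_differences` are made up for illustration; the article's own implementation runs as Javascript inside MongoDB.

```python
def finite_differences(times, values):
    """Rate of change between consecutive readings (one item shorter than the input)."""
    rates = []
    for i in range(1, len(values)):
        dt = times[i] - times[i - 1]
        # Guard against duplicate timestamps instead of dividing by zero.
        rates.append((values[i] - values[i - 1]) / dt if dt > 0 else 0.0)
    return rates


times = [0.0, 1.0, 2.0, 3.0]       # seconds (hypothetical readings)
speed = [10.0, 12.0, 15.0, 15.0]   # metres per second

accel = finite_differences(times, speed)      # m/s^2
jerk = finite_differences(times[1:], accel)   # m/s^3
print(accel, jerk)
```

Each differentiation shortens the series by one reading, which is why the jerk is computed against `times[1:]`.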

In this step I am going to write some Javascript code (that runs inside the MongoDB database) to augment raw data with derivatives for each record. You will find all this code in the file 'extract_driver_features_from_car_readings.js' which is located inside the 'machinelearning' folder. If you are wondering where to find the code, it is in Github at this location https://github.com/anupambagchi/driver-signature-raspberry-pi.

Processing raw records

Before diving into the code, let me clarify a few things. The code is written to run as a cronjob on a machine on the same network as the MongoDB database - so that it is accessible. Since it runs as a cron task, we need to know how many records to process from the raw data table. Thus we need to do some book-keeping on the database. We have a special collection called 'book_keeping' for this purpose where we store some book-keeping information. One of them is the last date till when we have processed the records. The data (in JSON format) may look like this:

To determine where we need to pick up the record processing from, here is one way to do this in a MongoDB script written in Javascript.

The 'else' part of the logic above is for the initialization phase when we run it for the first time - we just want to pick up all records for the past year.
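For readers who think more easily in Python, the same resume-or-initialize decision might be sketched as follows. The field name `last_processed` and the function name are assumptions for illustration; the actual book-keeping document in the repository may be shaped differently.

```python
from datetime import datetime, timedelta


def processing_start(book_keeping_doc, now):
    """Resume from the stored timestamp, or go back one year on the very first run."""
    if book_keeping_doc and book_keeping_doc.get("last_processed"):
        return book_keeping_doc["last_processed"]
    # Initialization phase: no book-keeping entry exists yet.
    return now - timedelta(days=365)


now = datetime(2018, 1, 1)
first_run = processing_start(None, now)
later_run = processing_start({"last_processed": datetime(2017, 12, 31, 23, 0)}, now)
print(first_run, later_run)
```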

Keeping track of driver vehicle combination

Another book-keeping task is to keep track of the driver-vehicle combinations. To make the job easier for the machine learning algorithm, this should be converted to indices. Those indices are maintained in the database in another book-keeping collection called 'driver_vehicles'. This collection looks somewhat like this:

These are the names of vehicles with their drivers. Each combination has been assigned a number against it. When a driver-vehicle combination is encountered, the program looks to see if that combination already exists or not. If not, then it adds a new combination. Here is the code to do it.

You can see that a 'find' call to the MongoDB database is being made to read the hash-table into memory.
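The lookup-or-insert logic on the in-memory hash-table can be illustrated with a plain Python dictionary. This is a sketch under assumptions: the key format `driver|vehicle`, the function name and the choice to start numbering at 0 are hypothetical.

```python
def combination_index(table, driver, vehicle):
    """Return the index of a driver-vehicle pair, assigning the next free one if new."""
    key = f"{driver}|{vehicle}"
    if key not in table:
        table[key] = len(table)  # assumption: indices start at 0 and grow by 1
    return table[key]


table = {}
a = combination_index(table, "alice", "gmc-denali-2015")
b = combination_index(table, "bob", "gmc-denali-2015")
a_again = combination_index(table, "alice", "gmc-denali-2015")
print(a, b, a_again)
```

Using `len(table)` as the next index only works as long as entries are never deleted, which matches the append-only use described here.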

The next task is to query the raw collection to find out which records are new since the last time it ran.

NOTE: In the code segments below the dollar symbol will show up as '@'. Please make the appropriate substitutions when you read it. The correct code may be found in the github repository.

SQL vs. Document-oriented database

The next part is the crux of the actual process happening in this script. To understand how this works you need to be familiar with the MongoDB aggregation framework. A task like this will take way too much code if you start writing SQL. Most relational databases and also Spark offer SQL as a way to process and aggregate their data. The most common reason I have heard from managers to take that approach is - "it is easy". That works, but it is too verbose. That is why I personally prefer to use the aggregation framework of MongoDB to do my pre-processing since I can operate much faster than the other tools out there. It may not be "easy" as per the common belief, but a bit more effort in studying the aggregation framework pays off - saving a lot of development effort.

What about execution time? These scripts execute on the database nodes - inside the database. Thus you cannot make it any faster - since most of the time spent in dealing with large data is in transporting the data from the storage nodes to the execution nodes. In the case of the aggregation frameworks, you are getting all benefits of BigData for free. You are actually using in-database analytics here for the fastest execution time.

This nifty script above does a lot of things. The first thing to note in this script is that we are operating on a live database that has a constant stream of data coming in. Thus in order to select some records for processing we need to decide the time range first and only select those that fall within that time range. The next time we run this script, the records that could not be picked up this time, will be gathered and processed. This is all being done within the 'match' clause.

The second clause is the 'project' clause - which only selects the four required fields for the next stage of the pipeline. The 'unwind' clause flattens all arrays. The next 'match' clause selects the driver name and the final 'sort' clause sorts the data by eventtime in ascending order.

Distance on earth between two points

Before proceeding further, I would like to get one thing out of the way. Since we are dealing with a lot of latitude-longitude pairs and subsequently trying to find displacement, velocity and acceleration, we need a way to calculate the distance between two points on earth. There are several algorithms with varying degree of accuracy, but this is the one I have found to be computationally accurate (if you do not have an algorithm already provided by the database vendor).
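As one illustration of such an algorithm, here is the well-known haversine formula in Python. It is not necessarily the algorithm used in the repository script; it treats the earth as a sphere with a mean radius of 6371 km, which is an approximation.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean earth radius in metres (spherical approximation)


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # min() protects asin against rounding slightly above 1.0.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


one_degree = haversine_m(0.0, 0.0, 1.0, 0.0)
print(round(one_degree))
```

One degree of latitude comes out at roughly 111 km, which is a handy sanity check for any distance function of this kind.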

We will be using this function in the next analysis. As I said before, our goal is to augment our device records with extra information pertaining to time-derivatives. The following code adds extra fields "interval", "acceleration", "angular_velocity" and "incline" to each device record by comparing it with the preceding record.

Note that in line 50, I am saving the record in another collection called 'mldataset' which is going to be the collection on which I will apply feature-extraction for driver signatures. The final task is to save the book-keeping values in their respective tables.

Creating the feature set for driver signatures

The next step is to create the feature sets for driver signature analysis. I do this by first reading records from the augmented collection 'mldataset' and aggregating values over every 15 seconds. For each field that contains a number (and happens to change often), I will calculate three statistical values - the minimum over the time window, the maximum and the average. One can also include other statistical values like variance and kurtosis. I have not tried those in my experiment yet, but it is an enhancement that you can do easily.

You will find all the code in the file 'extract_features_from_mldataset.js' under the 'machinelearning' directory.

Let us do some book-keeping first.

Using the last time stamp stored in the system, we can figure out which records are new.

Extracting features for each driver

Now is the time to do the actual feature extraction from the data-set. Here is the entire loop:

For each driver (or rather driver-vehicle combination) that is identified, the first task is to figure out the last processing time for that driver and find all new records (lines 6 to 22). The next task of aggregating over 15 second windows is a MongoDB aggregation step starting from line 27. Aggregation tasks in MongoDB are described as a pipeline where each element of the flow does a certain task and passes on the result to the next element in the pipe. The first task is to match all records within the time-span that we want to process (lines 29 to 36). Then we only need to consider (i.e. project) the few fields that are of interest to us (lines 38 to 44). The '$group' element of the pipeline does the actual job of aggregation. The key to this aggregation step is the group-by Id that is created using a 'quarter' (line 55), which is nothing but a number between 0 and 3 created out of the seconds value of the time-stamp. This effectively creates the time windows needed for aggregation.

The actual aggregation steps are quite repetitive. See for example lines 61 to 63 where the average load, minimum load and maximum load is being calculated based on the aggregate over each time period. This is repeated for all the variables that we want to consider in the feature-set. Before storing it, the values are sorted based on event-time (lines 200 to 202).
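The windowing idea is easy to see outside of MongoDB too. The Python sketch below buckets records by a 'quarter' derived from the seconds of the time-stamp and computes the minimum, average and maximum per bucket. The exact composition of the group key (date, hour, minute, quarter) and the function names are assumptions; only the 'quarter' idea and the 'eventtime' and load fields come from the description above.

```python
from collections import defaultdict
from datetime import datetime


def window_key(ts):
    """Group-by key: the minute of the reading plus a quarter (0 to 3) of that minute."""
    quarter = ts.second // 15
    return (ts.year, ts.month, ts.day, ts.hour, ts.minute, quarter)


def aggregate_windows(records, field):
    """Minimum, average and maximum of one field per 15-second window."""
    buckets = defaultdict(list)
    for rec in records:
        buckets[window_key(rec["eventtime"])].append(rec[field])
    return {
        key: {"min": min(vals), "avg": sum(vals) / len(vals), "max": max(vals)}
        for key, vals in sorted(buckets.items())
    }


records = [
    {"eventtime": datetime(2018, 1, 1, 10, 0, 1), "load": 20.0},
    {"eventtime": datetime(2018, 1, 1, 10, 0, 14), "load": 40.0},
    {"eventtime": datetime(2018, 1, 1, 10, 0, 15), "load": 50.0},
]
features = aggregate_windows(records, "load")
print(features)
```

In the real script the same three aggregates are repeated for every variable in the feature-set, which is why the pipeline becomes long and repetitive.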

Saving the feature-set in a collection

The features thus calculated are saved to a new collection on which I would apply a machine-learning algorithm to create a model. The collection is called 'vehicle_signature_records' - where the feature-set records can be saved as follows:

The code above adds a few more variables to the result sent by the aggregation function, to identify the driver, the vehicle and the driver-vehicle combination (lines 8 to 21), and saves it to the database (line 26). However, lines 23 and 24 need an explanation since they do something very important.

Coding the approximate location of the driver

One of the interesting observations I made while working on this problem is that one can dramatically improve the accuracy of prediction by coding the approximate location of the driver. Imagine working on this problem for millions of drivers who are scattered all across the country. One of the important facts to consider is that most drivers generally drive around a certain location most of the time. Thus if their location is somehow encoded into the model, the model can quickly converge based on their location. Lines 23 and 24 attempt to do just that. They encode two numbers that represent the approximate latitude and longitude of the location. All these lines do is store the latitude and longitude with reduced accuracy.
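One simple way to reduce the accuracy of a coordinate is to round it to fewer decimal places. The sketch below is an assumption about how this could be done, not the exact expression used in the script; the choice of one decimal place (roughly 11 km in latitude) is also hypothetical.

```python
def coarse_location(lat, lon, decimals=1):
    """Latitude and longitude rounded to a coarse grid cell."""
    return round(lat, decimals), round(lon, decimals)


home = coarse_location(37.7749, -122.4194)
nearby = coarse_location(37.7812, -122.4101)
print(home, nearby)
```

Two readings taken a short distance apart collapse onto the same pair of numbers, which is what lets the model treat "usually drives around here" as a feature.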

Some more book-keeping

The final task is to store the book-keeping values.

After doing all this work (and by now you may be exhausted just from reading through it), we are finally ready to apply some real machine-learning algorithms. Remember, I said before that 95% of the task of a data scientist is in preparing, collecting, consolidating and cleaning the data. You are seeing a live example of that!

In big companies there are people called data-engineers who would do part of this job, but not all people are fortunate enough to have data-engineers working for them. Besides, if you can do all this work, you are more indispensable to the company you work for - and so it makes sense to develop these skills along with your analysis skills as a data-scientist.

Building a Machine Learning Model

Fortunately, the data has been created in a clean way, so there is no further clean-up required on it. Our data is in a MongoDB collection called 'vehicle_signature_records'. If you are a pure Data Scientist the following should be very familiar to you. The only difference between what I am going to do now and what you generally find in books and blogs is the data-source. I am going to read my data-sets directly from the MongoDB database instead of from CSV files. After reading the above, by now you must have become a partial expert at understanding MongoDB document structures. If not, don't worry, since all the data that we stored in the collection is flat - i.e. all values are present at the top level of each record. To illustrate how the data looks, let me show you one record from the collection.

That's quite a number of values for analysis! This is a good sign for us - more values give us more options to play with.

As you may have realized by now, I have come to the final stage of building the model which is a traditional machine-learning task that is usually done in Python or R. So the final piece will be written in Python. You will find the entire code at 'driver_signature_build_model_scikit.py' in the 'machinelearning' directory.

Feature selection and elimination

As is common in any data-science project, one must first take a look at the data and determine if any features need to be eliminated. If some features do not make sense for the model we are building then those features need to be dropped. One quick observation is that fuel pressure and incline have nothing to do with driver signatures. So I will eliminate those values from any further consideration.

Specifically for this problem, you need to do something special, which is a bit unusual, but required in this scenario.

If you look at the features carefully you will notice that some features are driver characteristics while others are vehicle characteristics. Thus it is important to not mix up the two sets. I have used my judgement to separate out the features into two sets as follows.

Having done this, now we need to build two different models - one to predict the driver and another one to predict the vehicle. It will be an interesting exercise to see which of these two models has better accuracy.

Reading directly from database instead of CSV

For completeness sake let me first give you two utility functions that are used to pull data out of the MongoDB database.

The function above is Pandas-friendly - it reads data from the MongoDB database and returns a Pandas data-frame so that you can get to work immediately with your machine-learning part.

In case you are not comfortable with MongoDB, I am giving you the entire dataset of the aggregated values in CSV format so that you can import it in any database you wish. The file is in GZIP format - so you need to unzip it before reading it. For those of you who are comfortable with MongoDB, here is the entire database dump.

Building a Machine Learning model

Now it is time to build the learning model. At program invocation two parameters are needed - the database host and which feature set to build the model for. This is handled in the code as follows:

Then I have some logic for setting the appropriate feature set within the application.

The following part is mostly boiler-plate code to break up the dataset into a training set, test set and validation set. While doing so all null values are set to zero as well.
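The general shape of that boiler-plate can be shown with the standard library alone. This sketch is not the repository code (which works on Pandas data-frames); the 60/20/20 proportions, the fixed seed and the field names are assumptions for illustration.

```python
import random


def fill_nulls(row, fields):
    """Copy of a record with missing or null feature values replaced by zero."""
    cleaned = dict(row)
    for field in fields:
        if cleaned.get(field) is None:
            cleaned[field] = 0
    return cleaned


def three_way_split(rows, test_frac=0.2, val_frac=0.2, seed=42):
    """Shuffle the rows and cut them into training, test and validation sets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    n_val = int(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    validation = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, test, validation


fields = ["avg_load", "max_load"]  # hypothetical feature names
rows = [fill_nulls({"id": i, "avg_load": None if i % 3 == 0 else float(i)}, fields)
        for i in range(10)]
train, test, validation = three_way_split(rows)
print(len(train), len(test), len(validation))
```

Replacing nulls with zero is simple but can distort minimum and average features, so it is worth checking how many nulls a field actually has before relying on it.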

Building a Random Forest Classifier and saving it

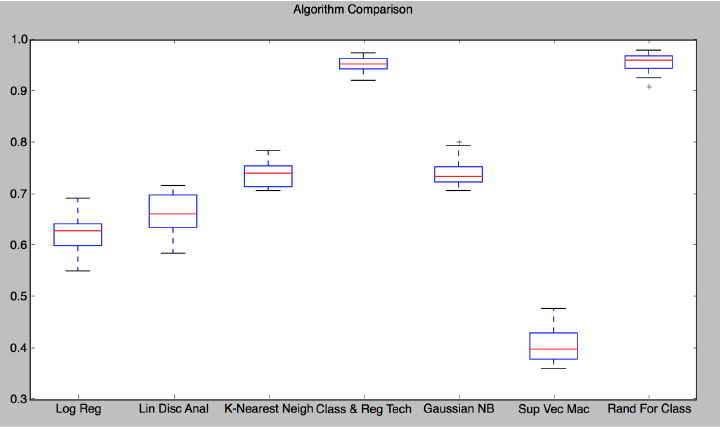

After trying out various classifiers with this dataset, it turns out that a Random Forest classifier gives the best accuracy. Here is the graph showing the accuracy of the different classifiers used with this data set. The two best algorithms turn out to be Classification & Regression and Random Forest Classifier. I chose the Random Forest Classifier since it is an ensemble technique and will have better resilience.

This is what you need to do to build a Random Forest classifier with this dataset.

After building the model, I am saving it in a file (line 4) so that it can be read easily when doing the prediction. To find out how well the model is doing, we have to use the test set to make a prediction and evaluate the model score.

Many kinds of evaluation metrics are calculated and printed in the above code segment. The most important one that I tend to look at is the overall model score, but the others will give you a good idea of the bias and variance, which indicate how resilient your model is with respect to changing values.

Measure of importance

One interesting analysis is to figure out which of the features is the most impactful on the result. This can be done using the simple code fragment below:

Prediction results and Conclusion

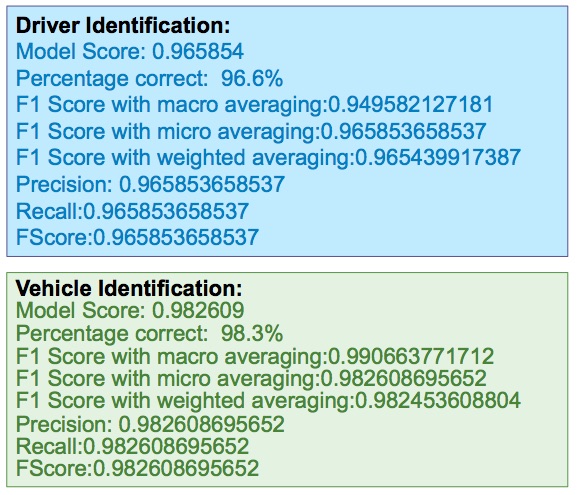

After running the two cases, namely driver prediction and vehicle prediction, I am typically getting the following scores.

This is encouraging given that there was always an apprehension about the score not being accurate enough due to the low frequency of data collection. This is an important factor, since we are creating this model out of the instantaneous time derivatives of values, and a low sampling rate will introduce a significant error. The dataset has 13 different driver vehicle combinations. There isn't a whole lot of driving data other than the experiments that were done, but with an accuracy that is 95% or above, there may be some value in this approach.

Another interesting fact is that the vehicle prediction is coming out to be more accurate than the driver. In other words, the parameters being emitted by the car tend to characterize the car more heavily than the driver. Most drivers drive the same way, but the machine characteristics of the car tend to distinguish them more clearly.

Commercial Use Cases

I have shown you one example of the many applications that can be built with an approach like this. It just involves equipping your car with a smart device like a Raspberry Pi and the rest is all backend server-side work. Here are all the use-cases that I can think of. You can take up any of these as your own project and attempt to find a solution.

- Parking assistance

- Adaptive collision detection

- Video evidence recording

- Detect abusive driving

- Crash detection

- Theft detection

- Parking meter

- Mobile hot-spot

- Voice recognition

- Connect racing equipment

- Head Unit display

- Traffic sign warning

- Pattern of usage

- Reset fault codes

- Driver recognition (this is already demonstrated here!)

- Emergency braking alert

- Animal overheating protection

- Remote start

- Remote seatbelt notifications

- Radio volume regulation

- Auto radio off when window down

- Eco-driving optimization alerts

- Auto lock/unlock

Commercial product

After doing this experiment building a Raspberry Pi kit from scratch, I found out that there is a product called AutoPi that you can buy which will cut short a lot of the hardware setup. I have no affiliation with AutoPi, but I thought it is interesting that this subject is being treated quite seriously by some companies in Europe.

Driver Signatures from Car Diagnostic Data captured using a Raspberry Pi: Part 2 (Reading real-time data and uploading to the cloud)

This is the second article of the series to determine driver signatures from OBD data using a Raspberry Pi. In the first article I had described in detail how to construct your Raspberry Pi. Now let us write some code to read data from your car and put it to the test. In this second article I will describe the software needed to read data from your car's CAN bus, including some data captured from the GPS antenna attached to your Raspberry Pi, combine it into one packet and send it over to the cloud. I will show you the software setup for capturing data on the client (the Raspberry Pi), store it locally, compress that data on a periodic basis, encrypt it and send it to a cloud server. I will also show you the server setup you need on the cloud to receive the data coming in from the Raspberry Pi, decrypt it and store it in a database or push it to a messaging queue for streaming purposes. All work will be done in Python. My database of choice is MongoDB for this project.

Before you read this article, I would encourage you to read the first article of this series so that you know what hardware setup you need to reproduce this yourself.

Capturing OBD data locally

To begin with let us first see how we can capture data on the Raspberry Pi and save it locally. Since this is the first task that needs to be accomplished, let us figure out a way to capture data constantly and save it somewhere. Our data transmittal task is actually achieved using two processes.

- Capture data constantly and keep saving it to a local database.

- Periodically (once a minute in our case) summarize the data collected since the last successful run, and send it over to the cloud database.

Since we are going to execute a lot of code, I am only going to illustrate the salient features of the solution. A lot of the simpler programming nuances are left for you to figure out by looking at the code.

Did I say looking at the code? Where is it? Well, the entire code-base for this problem is in Github at https://github.com/anupambagchi/driver-signature-raspberry-pi. You can clone this repository on your machine and go through the details. Note that I was successful in running this code only on a Raspberry Pi running Ubuntu Mate. I had some trouble installing the required module gps on a Mac, but it runs fine on a Raspberry Pi where it is supposed to run. Most of the modules required by the Python program can be obtained using the 'pip' command, e.g. 'pip install crypto'. To get the gps module you need to do 'sudo apt-get install python-gps'.

Where to store the data on a Raspberry Pi?

Remember that the Raspberry Pi is a small device with small memory and possibly small disk space. You need to choose a database that is nimble but effective for this scenario. We do not need any multi-threading ability, nor do we need to store months worth of data. The database is mostly going to be used to collect transitional data that will shortly be compacted and sent over to the cloud database.

The universal database for this purpose is the in-built SQLite database that comes with every Linux installation. It is a file-based database - which means one has to specify a file when instantiating this database. Make a clone of the repository at the '/opt' directory on your Raspberry Pi.

You will find a file called /opt/driver-signature-raspberry-pi/create_table_statements.sql and two other files with the extension '.db' which are your database files for running the job.

To initialize the database, you will need to run some initialization script. This is a one-time process on your Raspberry Pi. The SQL statements to set up the database tables are as follows:

To run it, you need to invoke the following:

This will create the necessary tables in the database file 'obd2data.db'.

Capturing OBD data

Now let us focus on capturing the OBD data. For this we make use of a popular Python library called pyobd which may be found at https://github.com/peterh/pyobd. There have been many forks of this library over the past 8 years or so. However my repository adds a lot to it - mainly for cloud processing and machine learning - so I decided not to call it a fork since the original purpose of the library has been altered a lot. I also modified the code to work well with Python 3.

The main program to read data from the OBD port and save it to a SQLite3 database may be found in 'obd_sqlite_recorder.py'. You can refer to this file under 'src' folder while you read the following.

To invoke this program you have to pass two parameters - the name of the user and a string representing the vehicle. For the latter I generally use a convention '<make>-<model>-<year>' for example 'gmc-denali-2015'. Let us now go through the salient features of the OBD scanner.

After doing some basic sanity tests, such as whether the program is running as superuser or not, and whether the appropriate number of parameters have been passed or not, the next step is to search the ports for GSM modem and initialize it.

Once this is done, we need to clean up anything that is 15 days or older so that the database does not grow any bigger. The expectation is that that data is too old and should have been transmitted to the cloud long ago, so we should clean it up to keep the database healthy.

Notice that we are opening up the database connection and executing a SQL statement to clean up and purge the data that is older than 15 days.
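A purge of this kind can be sketched with Python's built-in sqlite3 module. The table name CAR_READINGS appears later in this article, but the column names (EVENTTIME, DEVICEDATA), the time-stamp format and the function name used here are assumptions; check create_table_statements.sql in the repository for the real schema.

```python
import sqlite3
from datetime import datetime, timedelta


def purge_old_readings(conn, now, keep_days=15):
    """Delete readings that are keep_days old or older; return the number removed."""
    cutoff = (now - timedelta(days=keep_days)).isoformat(sep=" ")
    cur = conn.execute("DELETE FROM CAR_READINGS WHERE EVENTTIME <= ?", (cutoff,))
    conn.commit()
    return cur.rowcount


# In-memory database with a hypothetical schema, for demonstration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE CAR_READINGS (ID INTEGER PRIMARY KEY, EVENTTIME TEXT, DEVICEDATA TEXT)"
)
now = datetime(2018, 3, 20, 12, 0, 0)
stamps = [(now - timedelta(days=d)).isoformat(sep=" ") for d in (1, 14, 16, 30)]
conn.executemany(
    "INSERT INTO CAR_READINGS (EVENTTIME, DEVICEDATA) VALUES (?, '{}')",
    [(s,) for s in stamps],
)
deleted = purge_old_readings(conn, now)
remaining = conn.execute("SELECT COUNT(*) FROM CAR_READINGS").fetchone()[0]
print(deleted, remaining)
```

Storing time-stamps as text in a fixed 'YYYY-MM-DD HH:MM:SS' layout keeps string comparison consistent with chronological order, which is what makes the simple `<=` filter work.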

Next it is time to connect to the OBD port. Check if the connection can be established, and if not exit the program. Before you run this program, you need to use your Bluetooth settings on the desktop to connect to the ELM 327 device that should be alive and available for connection as soon as you turn the ignition switch on. This connection may be done manually by using the Linux Desktop UI or through a program that automatically does the connection as soon as the machine comes alive.

Notice that we first start the GPS poller. Then attempt to connect to the OBD recorder, and exit the program if unsuccessful.

Now that all connections have been checked, it is time to do the actual job of recording the readings.

A few lines of this code need explanation. The readings are stored in the variable 'results'. This is a dictionary that is first populated through a call to obd_recorder.get_obd_data() [Line 18], which loops through all the variables that we need to measure and reads their values. This dictionary is then augmented with the DTC codes, if any codes are found [Line 22]. DTC stands for Diagnostic Trouble Code; these are codes set by the manufacturer to represent error conditions inside the vehicle or engine. In lines 27-31, the results dictionary is augmented with the username, vehicle and mobile SIM card parameters. Finally in lines 34-41 we add the GPS readings.

So you see that each reading contains information from various sources - the CAN bus, SIM card, user-provided data and GPS signals.

When all data is gathered in the record, we save it in the database (Line 47-48) and commit the changes.

Uploading the data to the cloud

Note that all the data that has been saved so far has not left the machine - it is stored locally inside the machine. Now it is time to work on a mechanism to send it over to the cloud. This data must be

- summarized

- compressed

- encrypted

before we can upload it to our server. On the server side, that same record needs to be decrypted, uncompressed and then stored in a more persistent storage where one can do some BigData analysis. At the same time it needs to be streamed to a messaging queue to make it available for stream processing - mainly for alerting purposes.

Stability and Thread-safety

The driver for uploading data to the cloud is a cronjob that runs every minute. We could also write a program with an internal timer that runs like a daemon, but after a lot of experimentation - specially with large data-sets, I have realized that running an internal timer leads to instability over the long run. When a program runs for ever, it may build up some garbage in the heap over time and ultimately freezes. When a program is invoked through a cronjob, it wakes up, runs, does its job for that moment and exits. That way it always stays out of the way of the data collection program and keeps the machine healthy.

On the same lines, I also need to mention something about thread-safety pertaining to SQLite3. The new task that I am about to attempt is summarization of the data collected by the recorder. So I can technically use the same database that runs from this single file called obd2data.db - right? Not so fast. Because the recorder runs in an infinite loop and constantly writes data to this database, if you attempt to write another table to this same database, it runs into thread-safety issues and the table gets corrupted. I tried this initially, then realized that this was not a stable architecture when I saw it frozen or found data-corruption. So I had to alter it to write the summary to a different database - leaving the raw data database in read-only mode.

Data Compactor and Transmitter

To accomplish the task of transmitting the summarized data to the cloud, let us write a class that fulfils this task. You will find this in the file obd_transmitter.py.

The main loop that does the task is as follows:

There are three tasks - collect, transmit and cleanup. Let us take a look at each of these individually.

Collect and summarize

The following code will create packets of data for each minute, encrypt it, compress it and then transmit it. There are finer details in each of these steps that I am going to explain. But let's look at the code first.

To do some book-keeping (lines 2 to 13), I am keeping the last-processed Id in a separate table. Every time I successfully process a bunch of records, I save the last-processed Id in this table to pick up from during the next run. Remember, this program is being triggered from a cronjob that runs every minute. You will find the cron description in the file crontab.txt under the scripts directory.
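The book-keeping idea can be sketched roughly as follows. The table name LAST_PROCESSED and both function names are assumptions made for illustration; the actual schema in the repository may differ.

```python
import sqlite3

def _ensure_table(conn):
    # Hypothetical single-row table; the CHECK keeps it to exactly one row.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS LAST_PROCESSED ("
        "id INTEGER PRIMARY KEY CHECK (id = 1), last_id INTEGER NOT NULL)"
    )

def get_last_processed_id(conn):
    """Return the Id saved by the previous run, or 0 on the very first run."""
    _ensure_table(conn)
    row = conn.execute("SELECT last_id FROM LAST_PROCESSED WHERE id = 1").fetchone()
    return row[0] if row else 0

def set_last_processed_id(conn, last_id):
    """Remember where this run stopped so the next cron invocation resumes there."""
    _ensure_table(conn)
    conn.execute(
        "INSERT OR REPLACE INTO LAST_PROCESSED (id, last_id) VALUES (1, ?)",
        (last_id,),
    )
    conn.commit()
```

Because the process exits after every run, this table is the only state carried from one cron invocation to the next.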

Then we collect all the new records (lines 15 to 40) from the CAR_READINGS table into an array allRecords, where each item is a rich document extracted from the JSON payload. One important point to note is that we do not include the current minute - since it may be incomplete. In lines 42 to 56 we find out how many minutes have elapsed since the last summarization and then pick up only those whole minutes which remain to be summarized and sent over. In Line 60 we open a connection to a new database (stored in a different file - obd2summarydata.db) to store the summary data.
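To illustrate the "whole minutes only" rule, here is a small sketch that groups records by minute and leaves out the minute that is still in progress. The record layout (a dict with an eventtime datetime) and the function name are assumptions for this example only.

```python
from collections import defaultdict
from datetime import datetime

def group_complete_minutes(records, now):
    """Group records by minute, skipping the current (possibly incomplete) minute."""
    current_minute = now.replace(second=0, microsecond=0)
    by_minute = defaultdict(list)
    for rec in records:
        # Truncate the timestamp to the minute it belongs to.
        minute = rec["eventtime"].replace(second=0, microsecond=0)
        if minute < current_minute:
            by_minute[minute].append(rec)
    # Return the minutes in chronological order.
    return dict(sorted(by_minute.items()))
```

Records from the skipped minute are not lost; they are picked up by the next run, once that minute has fully elapsed.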

Lines 62 to 86 do the task of actually creating the summarized packet. Each packet has three fields - the time stamp (only minute, no seconds), the packet of all data collected during the minute, and the summary data (i.e. aggregates over the minute). First this packet is created using a summarize function that I will describe later. Then this packet is encrypted with AES using a randomly generated encryption key (Line 73). Since the data packet size is non-uniform, we encrypt the packet using a randomly-generated key and then send the key over to the server in encrypted form to decrypt the packet. The encrypted packet is compressed (Line 78) to prepare it for transmission. The last step is to encrypt the transmission key itself so that it can also be sent over to the server in the same payload. We use PKCS#1 OAEP encryption for this using a public key (encryption.key) stored on the server. The eventtime (whole minute), compressed/encrypted packet, encrypted key and the datasize are saved as a record in the table PROCESSED_READINGS (Line 86).

Note that when the packet is created you have a choice to send only the summarized data or the entire raw records along with the summarized data. It is obvious that if you want to save bandwidth you would do most of the "edge-processing" work in the Raspberry Pi itself and only send the summary record each time. However, in this experiment I wanted to do some additional work on the cloud - which was more granular than the once-a-minute scenario. As shown in part 3 of this series of articles, I actually do the summarization once every 15 seconds for driver signature analysis. So I needed to send all the raw data as well as the summary in my packet - thereby increasing the bandwidth requirements. However, the compression of data helped a lot in reducing the size of the original packet by almost 90%.
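To get a feel for why compression pays off on this kind of data, here is a rough sketch using Python's zlib on a JSON packet. It leaves the encryption step out entirely and compresses the plain JSON text, so it only illustrates the compression call; the field names are made up, and the ratio you get depends on your own data.

```python
import json
import zlib

def compress_packet(packet):
    """Serialize a packet to compact JSON and compress it; returns (raw, compressed)."""
    raw = json.dumps(packet, separators=(",", ":")).encode("utf-8")
    # Level 9 favours size over speed, which suits a once-a-minute job.
    return raw, zlib.compress(raw, 9)

# Hypothetical one-minute packet with repetitive readings.
packet = {
    "eventtime": "2024-01-01 10:00",
    "data": [{"speed": 40 + i % 3, "rpm": 1500 + 10 * (i % 5)} for i in range(80)],
}
raw, compressed = compress_packet(packet)
print(len(raw), "bytes before,", len(compressed), "bytes after")
```

Sensor readings repeat the same keys and similar values in every record, which is the kind of redundancy a general-purpose compressor removes well.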

Data Aggregation

Let me now describe how the summarization is done. This is the "edge-computing" part of the entire process that is difficult to do within generic devices. Any IoT device (CalAmp for example) will be able to do most of the work pertaining to capturing OBD data and transmitting it to the cloud. But those devices are perhaps not capable enough to do the summarization - which is why one needs a more powerful computing machine like a Raspberry Pi to do the job. All I do for summarization is the following:

Look at line 56 of the previous block of code. You will see an array of items describing all the items that we need to summarize. This is in the variable summarizationItems. For each item in this list, we need to find the mean, median, mode, standard deviation, variance, maximum and minimum during each minute (Lines 11 to 17). The summarized items are appended to each record before it is saved to the summary database.
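The aggregation itself can be sketched with Python's statistics module. This is an illustration of the seven aggregates named above, not the repository's summarize function; the record layout and the output keys are assumptions.

```python
import statistics

def summarize(records, items):
    """Compute per-item aggregates over the records of one minute."""
    summary = {}
    for item in items:
        # Keep only numeric readings; an item may be missing from some records.
        values = [r[item] for r in records if isinstance(r.get(item), (int, float))]
        if not values:
            continue
        summary[item] = {
            "mean": statistics.mean(values),
            "median": statistics.median(values),
            # On Python 3.8+ mode() returns the first of several equally common values.
            "mode": statistics.mode(values),
            # Sample standard deviation and variance need at least two values.
            "stdev": statistics.stdev(values) if len(values) > 1 else 0.0,
            "variance": statistics.variance(values) if len(values) > 1 else 0.0,
            "max": max(values),
            "min": min(values),
        }
    return summary
```

Calling summarize(records, summarizationItems) on one minute of records would then yield one dictionary of aggregates per item.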

Transmitting the data to the cloud

To transmit the data over to the cloud you need to first set up an end-point. I am going to show you later how you can do that on the server. For now, let us assume that you already have that available. Then from the client side you can do the following to transmit the data:

The end-point (that I am going to show you later) will accept POST requests. But you also need to configure a load-balancer that just allows a connection from the outside world to inside the firewall. You must establish adequate security measures to ensure that your tunnel only exposes a certain port on the internal server.

Lines 1 to 7 set up the database connection to the summary database. In the table I am storing a flag "TRANSMITTED" that indicates whether the record has been transmitted or not. For all records that have not been transmitted (Line 9) I am creating a payload comprising the size of the packet, the encrypted key to use for decrypting the packet, the compressed/encrypted data packet and the eventtime (Line 17). Then this payload is POSTed to the end-point (Line 18). If the transmission is successful, the flag TRANSMITTED is set to true for this packet so that we do not attempt to send it again.
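A rough sketch of this transmit-and-flag loop, using only the standard library. The column names (EVENTTIME, DEVICEDATA, ENCKEY, DATASIZE), the payload keys other than size, and the function names are assumptions; the binary fields are base64-encoded here so that they fit into JSON.

```python
import base64
import json
import sqlite3
import urllib.request

def http_post(url, payload):
    """POST the payload as JSON; True on a 2xx response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return 200 <= resp.status < 300

def transmit_pending(conn, url, post=http_post):
    """Send every untransmitted record and flag the ones that went through."""
    rows = conn.execute(
        "SELECT EVENTTIME, DEVICEDATA, ENCKEY, DATASIZE "
        "FROM PROCESSED_READINGS WHERE TRANSMITTED = 0"
    ).fetchall()
    sent = 0
    for eventtime, data, key, size in rows:
        payload = {
            "size": size,
            "key": base64.b64encode(key).decode("ascii"),
            "data": base64.b64encode(data).decode("ascii"),
            "eventtime": eventtime,
        }
        try:
            ok = post(url, payload)
        except OSError:  # urllib's URLError and HTTPError are OSError subclasses
            ok = False
        if ok:
            conn.execute(
                "UPDATE PROCESSED_READINGS SET TRANSMITTED = 1 WHERE EVENTTIME = ?",
                (eventtime,),
            )
            conn.commit()
            sent += 1
    return sent
```

A record whose POST fails simply keeps TRANSMITTED = 0, so the next cron run retries it without any extra logic.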

Cleanup

The cleanup operation is pretty simple. All I do is delete all records from the summary table that are more than 15 days old.
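As a sketch, the cleanup boils down to a single DELETE with a cutoff. This assumes that EVENTTIME is stored as text in "YYYY-MM-DD HH:MM" form (so that string comparison matches chronological order); the column name and the function name are hypothetical.

```python
import sqlite3
from datetime import datetime, timedelta

def cleanup(conn, now, days=15):
    """Delete summary records older than the given number of days; returns rows removed."""
    cutoff = (now - timedelta(days=days)).strftime("%Y-%m-%d %H:%M")
    cur = conn.execute(
        "DELETE FROM PROCESSED_READINGS WHERE EVENTTIME < ?", (cutoff,)
    )
    conn.commit()
    return cur.rowcount
```

If you never want to drop data that has not reached the server yet, you could add "AND TRANSMITTED = 1" to the WHERE clause.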

Server on the Cloud

As a final piece to this article let me describe how to set up the end-point of the server. There are many items that are needed to put it together. Surprisingly all of this is achieved in a relatively small amount of code - thanks to the crispness of the Python language.

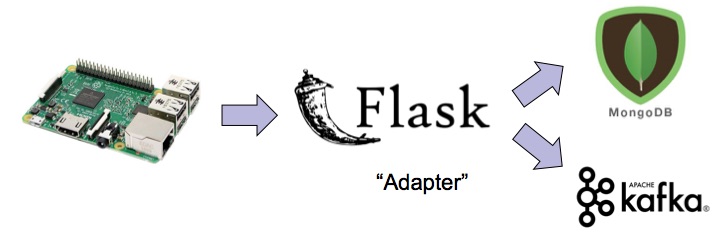

I decided to use MongoDB as persistent storage for records and Kafka as the messaging server for streaming. The following tasks are done in order in this function:

- Check for invalid usage of this web-service, and raise an exception if illegal (Lines 1 to 3). A simple test is done to check for the existence of 'size' in the payload to ensure this.

- Decompress the packet (Lines 9 to 10)

- Decrypt the transmission key using the private key (decryption.key) stored on the server (Lines 12 to 14)

- Decrypt the data packet (Line 19)

- Convert the JSON record to an internal Python dictionary for digging deeper into it (Line 21)

- Save the record in MongoDB (Line 27)

- Push the same record into a Kafka messaging queue (Lines 27 to 50)
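The order of the steps above can be sketched as a plain function. This is not the code from flaskserver.py: the two decrypt callables stand in for the RSA (OAEP) and AES steps, the sinks stand in for the MongoDB insert and the Kafka producer, and the payload keys other than 'size' are assumptions.

```python
import base64
import json
import zlib

def handle_payload(payload, decrypt_key, decrypt_packet, sinks=()):
    """Validate, decompress, decrypt and parse one uploaded packet."""
    # 1. Reject requests that do not look like a packet from the transmitter.
    if "size" not in payload:
        raise ValueError("invalid usage: 'size' missing from payload")
    # 2. Decompress the packet (still encrypted at this point).
    encrypted_packet = zlib.decompress(base64.b64decode(payload["data"]))
    # 3. Recover the per-packet key with the server's private key (placeholder callable).
    session_key = decrypt_key(base64.b64decode(payload["key"]))
    # 4. Decrypt the data packet with that key (placeholder callable).
    plaintext = decrypt_packet(encrypted_packet, session_key)
    # 5. Turn the JSON text into a Python dictionary.
    record = json.loads(plaintext)
    # 6. Hand the record to each sink, e.g. a database insert and a message producer.
    for sink in sinks:
        sink(record)
    return record
```

Passing the decrypt functions and sinks in as arguments keeps the sketch free of third-party dependencies and makes the sequence easy to test with stand-ins.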

This functionality is exposed as web-service using a Flask server. You will find the rest of the server code in file flaskserver.py in folder 'server'.

I have covered the salient features to put this together, skipping the other obvious pieces which you can peruse yourself by cloning the entire repository.

Conclusion

I know this has been a long post, but I needed to cover a lot of things. And we have not even started working on the data-science part. You may have heard that a data scientist spends 90% of the time in preparing data. Well, this task is even bigger - we had to set up the hardware and software to generate raw real-time data and store it in real-time to even start thinking about data science. If you are curious to see a sample of the collected data, you can find it here.

But now that this work is done, and we have taken special care that the generated data is in a nicely formatted form, the rest of the task should be easier. You will find the data science related stuff in the third and final episode of this series.

© Copyright 2024 Anupam Bagchi