This is the second article in a series on determining driver signatures from OBD data using a Raspberry Pi. In the first article I described in detail how to build your Raspberry Pi setup. Now let us write some code to read data from your car and put it to the test. In this article I describe the software needed to read data from your car's CAN bus, add data captured from the GPS antenna attached to your Raspberry Pi, combine everything into one packet and send it to the cloud. I will show you the software setup for capturing data on the client (the Raspberry Pi), storing it locally, periodically compressing and encrypting it, and sending it to a cloud server. I will also show you the server setup you need in the cloud to receive the data coming in from the Raspberry Pi, decrypt it, and store it in a database or push it to a messaging queue for streaming purposes. All work is done in Python, and my database of choice for this project is MongoDB.

Before you read this article, I would encourage you to read the first article of this series so that you know what hardware setup you need to reproduce this yourself.

Capturing OBD data locally

To begin with, let us see how we can capture data on the Raspberry Pi and save it locally. Since this is the first task to accomplish, we need a way to capture data continuously and save it somewhere. The data transmission task is split across two processes:

- Capture data constantly and keep saving it to a local database.

- Periodically (once a minute in our case) summarize the data collected since the last successful run, and send it over to the cloud database.

Since there is a lot of code involved, I am only going to illustrate the salient features of the solution. Many of the simpler programming details are left for you to figure out by looking at the code.

Did I say looking at the code? Where is it? The entire code base for this project is on GitHub at https://github.com/anupambagchi/driver-signature-raspberry-pi. You can clone this repository on your machine and go through the details. Note that I was only able to run this code on a Raspberry Pi running Ubuntu MATE. I had some trouble installing the required gps module on a Mac, but it runs fine on the Raspberry Pi, where it is meant to run. Most of the modules required by the Python programs can be installed with pip, e.g. 'pip install pycryptodome', which provides the Crypto package used by the encryption code. To get the gps module, run 'sudo apt-get install python-gps'.

Where to store the data on a Raspberry Pi?

Remember that the Raspberry Pi is a small device with limited memory and possibly limited disk space. You need to choose a database that is nimble but effective for this scenario. We do not need multi-threading support, nor do we need to store months' worth of data. The database is mostly used to hold transient data that will shortly be compacted and sent to the cloud database.

The natural choice for this purpose is SQLite, which is available on practically every Linux distribution and whose Python bindings (the sqlite3 module) ship with Python itself. It is a file-based database, which means you specify a file when opening the database. Clone the repository into the '/opt' directory on your Raspberry Pi.


You will find a file called /opt/driver-signature-raspberry-pi/create_table_statements.sql and two other files with the extension '.db' which are your database files for running the job.

To initialize the database, you need to run an initialization script. This is a one-time step on your Raspberry Pi. The SQL statements that set up the database tables are as follows:

```sql
CREATE TABLE CAR_READINGS(
    ID INTEGER PRIMARY KEY NOT NULL,
    EVENTTIME TEXT NOT NULL,
    DEVICEDATA BLOB NOT NULL
);

CREATE TABLE LAST_PROCESSED(
    TABLE_NAME TEXT NOT NULL,
    LAST_PROCESSED_ID INTEGER NOT NULL
);

CREATE TABLE PROCESSED_READINGS(
    ID INTEGER PRIMARY KEY NOT NULL,
    EVENTTIME TEXT NOT NULL,
    TRANSMITTED BOOLEAN DEFAULT FALSE,
    DEVICEDATA BLOB NOT NULL,
    ENCKEY BLOB NOT NULL,
    DATASIZE INTEGER NOT NULL
);
```


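To run it, invoke the following:

```shell
$ sqlite3 obd2data.db < create_table_statements.sql
```

This creates the necessary tables in the database file 'obd2data.db'. The compactor described later stores its packets in the PROCESSED_READINGS table of a second file, 'obd2summarydata.db', so if that file has not been initialized yet, run the same command against it as well.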
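If the sqlite3 command-line shell is not installed on your Pi, the same script can be applied from Python, whose standard library includes the sqlite3 module. The sketch below is a minimal illustration, not part of the repository: init_database is a hypothetical helper that runs the whole script with Connection.executescript() and returns the names of the tables found afterwards. Like the shell command, it is meant to be run once per database file; a second run fails because the script's CREATE TABLE statements have no IF NOT EXISTS clause.

```python
import sqlite3


def init_database(db_path: str, schema_sql: str) -> list:
    """Apply a CREATE TABLE script to the SQLite file at db_path; return the table names."""
    conn = sqlite3.connect(db_path)
    try:
        # executescript() runs every ';'-separated statement in the script
        conn.executescript(schema_sql)
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    finally:
        conn.close()
    return [name for (name,) in rows]
```

For example, init_database('obd2data.db', Path('create_table_statements.sql').read_text()) (with Path from pathlib) should report CAR_READINGS, LAST_PROCESSED and PROCESSED_READINGS.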

Capturing OBD data

Now let us focus on capturing the OBD data. For this we use a popular Python library called pyobd, which may be found at https://github.com/peterh/pyobd. There have been many forks of this library over the past eight years or so. However, my repository adds a lot to it, mainly for cloud processing and machine learning, so I decided not to call it a fork, since the original purpose of the library has been altered a lot. I also modified the code to work well with Python 3.

The main program, which reads data from the OBD port and saves it to a SQLite3 database, is 'obd_sqlite_recorder.py'. You can refer to this file in the 'src' folder while you read the following.

To invoke this program you have to pass two parameters - the name of the user and a string representing the vehicle. For the latter I generally use a convention '<make>-<model>-<year>' for example 'gmc-denali-2015'. Let us now go through the salient features of the OBD scanner.

After some basic sanity checks, such as whether the program is running as superuser and whether the right number of parameters has been passed, the next step is to scan the serial ports for a GSM modem and initialize it. If a modem is found, it also gives us the SIM card's ICCID and the modem's IMEI, which are attached to every reading.

```python
# Enumerate candidate serial ports: Bluetooth RFCOMM devices and USB serial devices
allRFCommDevicePorts = scanRadioComm()
allUSBDevicePorts = scanUSBSerial()
print("RFPorts detected with devices on them: " + str(allRFCommDevicePorts))
print("USBPorts detected with devices on them: " + str(allUSBDevicePorts))

usbPortsIdentified = {}
iccid = ''  # Default values are blank for those that come from GSM modem
imei = ''

for usbPort in allUSBDevicePorts:
    try:
        with time_limit(4):  # give each port at most 4 seconds to answer as a GSM modem
            print("Trying to connect as GSM to " + str(usbPort))
            gsm = GsmModem(port=usbPort, logger=GsmModem.debug_logger).boot()
            print("GSM modem detected at " + str(usbPort))
            allUSBDevicePorts.remove(usbPort)  # We just found it engaged, don't use it again
            iccid = gsm.query("AT^ICCID?", "^ICCID:").strip('"')
            imei = gsm.query("ATI", "IMEI:")
            usbPortsIdentified[str(usbPort)] = "gsm"
            print(usbPort, usbPortsIdentified[str(usbPort)])
            break  # We got a port, so break out of the loop (this also makes the remove() above safe)
    except TimeoutException:
        # Maybe this is not the right port for the GSM modem, so skip to the next one
        print("Timed out!")
    except IOError:
        print("IOError - so " + str(usbPort) + " is also not a GSM device")
```

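The port scan relies on a time_limit context manager and a TimeoutException defined in the repository, whose code is not shown here. As an assumption about how such a helper is commonly written on Linux, here is a minimal sketch based on SIGALRM: the alarm interrupts a modem boot that hangs, and the finally block cancels the alarm and restores the previous handler. This approach only works in the main thread of a Unix process and has one-second granularity.

```python
import signal
from contextlib import contextmanager


class TimeoutException(Exception):
    """Raised when the guarded block runs longer than allowed."""


@contextmanager
def time_limit(seconds: int):
    """Raise TimeoutException if the with-block takes longer than `seconds` (Unix, main thread)."""
    def on_alarm(signum, frame):
        raise TimeoutException("Timed out after %d seconds" % seconds)

    previous_handler = signal.signal(signal.SIGALRM, on_alarm)
    signal.alarm(seconds)  # schedule SIGALRM
    try:
        yield
    finally:
        signal.alarm(0)  # cancel any pending alarm
        signal.signal(signal.SIGALRM, previous_handler)
```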

Once this is done, we delete anything that is 15 days or older so that the database does not keep growing. Data that old should have been transmitted to the cloud long ago, so purging it keeps the database healthy.

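```python
import sqlite3
from datetime import datetime, timedelta

# Open a SQLite3 connection
dbconnection = sqlite3.connect('/opt/driver-signature-raspberry-pi/database/obd2data.db')
dbcursor = dbconnection.cursor()

# Do some cleanup as soon as you start. This is to prevent the database size from growing too big.
# EVENTTIME is stored as a UTC ISO 8601 string, so the cutoff is computed in UTC as well.
fifteendaysago = datetime.utcnow() - timedelta(days=15)
fifteendaysago_str = fifteendaysago.isoformat()
dbcursor.execute('DELETE FROM CAR_READINGS WHERE EVENTTIME < ?', (fifteendaysago_str,))
dbconnection.commit()

# VACUUM rebuilds the database file to reclaim the freed space. It takes no table name
# (its optional argument is a schema name such as "main") and cannot run inside a
# transaction, which is why the DELETE is committed first.
dbcursor.execute('VACUUM')
dbconnection.commit()
```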


Notice that we are opening up the database connection and executing a SQL statement to clean up and purge the data that is older than 15 days.
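The DELETE works on a plain text column because ISO 8601 timestamps are zero-padded and ordered from year down to microsecond, so comparing them as strings gives the same order as comparing the times themselves. The sketch below isolates the purge in a hypothetical purge_old_readings() helper (an illustration, not code from the repository) that takes the current time as a parameter, which makes the 15-day cutoff easy to test against an in-memory database.

```python
import sqlite3
from datetime import datetime, timedelta


def purge_old_readings(conn: sqlite3.Connection, now: datetime, days: int = 15) -> int:
    """Delete CAR_READINGS rows older than `days` before `now`; return how many were removed.

    EVENTTIME holds strings such as '2017-05-14T19:51:29.071710'. Because the format is
    fixed-order and zero-padded, string comparison in SQL is chronological comparison.
    """
    cutoff = (now - timedelta(days=days)).isoformat()
    cur = conn.execute("DELETE FROM CAR_READINGS WHERE EVENTTIME < ?", (cutoff,))
    conn.commit()
    return cur.rowcount  # number of rows deleted
```

Passing `now` in, rather than reading the clock inside the function, is only a testability choice; in the recorder you would pass datetime.utcnow().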

Next it is time to connect to the OBD port. We check whether the connection can be established, and if not, exit the program. Before you run this program, use the Bluetooth settings on the desktop to connect to the ELM327 adapter, which should be powered up and available for connection as soon as you turn the ignition on. This connection can be made manually through the Linux desktop UI, or by a program that connects automatically as soon as the machine comes up.

```python
gps_poller.start()  # start the GPS polling thread

# The OBD parameters to read on every pass
logitems_full = ["dtc_status", "dtc_ff", "fuel_status", "load", "temp", "short_term_fuel_trim_1",
                 "long_term_fuel_trim_1", "short_term_fuel_trim_2", "long_term_fuel_trim_2",
                 "fuel_pressure", "manifold_pressure", "rpm", "speed", "timing_advance", "intake_air_temp",
                 "maf", "throttle_pos", "secondary_air_status", "o211", "o212", "obd_standard",
                 "o2_sensor_position_b", "aux_input", "engine_time", "abs_load", "rel_throttle_pos",
                 "ambient_air_temp", "abs_throttle_pos_b", "acc_pedal_pos_d", "acc_pedal_pos_e",
                 "comm_throttle_ac", "rel_acc_pedal_pos", "eng_fuel_rate", "drv_demand_eng_torq",
                 "act_eng_torq", "eng_ref_torq"]

# Initialize the OBD recorder
obd_recorder = OBD_Recorder(logitems_full)
need_to_exit = False
try:
    # Candidate ports: Bluetooth (RFCOMM) plus the USB ports not taken by the GSM modem
    obd_recorder.connect(allRFCommDevicePorts + allUSBDevicePorts)
except Exception:
    traceback.print_exc(limit=1, file=sys.stdout)
    print("Unable to connect to OBD port. Exiting...")
    need_to_exit = True

if not obd_recorder.is_connected():
    print("OBD device is not connected. Exiting.")
    need_to_exit = True

if need_to_exit:
    os._exit(-1)  # exit immediately, without waiting for the GPS thread
```


Notice that we first start the GPS poller, then attempt to connect the OBD recorder, and exit the program if that fails.

Now that all connections have been checked, it is time to do the actual job of recording the readings.

```python
import json
import sys
import traceback
from datetime import datetime

import serial

# Everything looks good - so start recording
print("Database logging started...")
print("Ids of records inserted will be printed on screen.")
lastminute = -1

try:
    while True:
        # It may take a second or two to get good data
        # print(gpsd.fix.latitude, ', ', gpsd.fix.longitude, ' Time: ', gpsd.utc)
        if obd_recorder.port is None:
            print("Your OBD port has not been set correctly, found None.")
            sys.exit(-1)

        localtime = datetime.now()
        results = obd_recorder.get_obd_data()  # one value per item in logitems_full

        # Read the diagnostic trouble codes once per minute
        currentminute = localtime.minute
        if currentminute != lastminute:
            dtc_codes = obd_recorder.get_dtc_codes()
            print('DTC=', str(dtc_codes))
            results["dtc_code"] = dtc_codes
            lastminute = currentminute

        # User-provided data and SIM card parameters
        results["username"] = username
        results["vehicle"] = vehicle
        results["eventtime"] = datetime.utcnow().isoformat()
        results["iccid"] = iccid
        results["imei"] = imei

        # GPS readings; a GeoJSON Point lists longitude before latitude
        results["location"] = {"type": "Point",
                               "coordinates": [gpsd.fix.longitude, gpsd.fix.latitude]}
        results["heading"] = gpsd.fix.track
        results["altitude"] = gpsd.fix.altitude
        results["climb"] = gpsd.fix.climb
        results["gps_speed"] = gpsd.fix.speed

        # Insert a row of data and save (commit) the changes
        results_str = json.dumps(results)
        dbcursor.execute('INSERT INTO CAR_READINGS(EVENTTIME, DEVICEDATA) VALUES (?,?)',
                         (results["eventtime"], results_str))
        dbconnection.commit()
        print(dbcursor.lastrowid)
except (KeyboardInterrupt, SystemExit):  # when you press Ctrl+C
    print("Manual intervention. Killing thread...")
except serial.serialutil.SerialException as exc:
    print("Serial connection error detected - OBD device may not be communicating. Exiting.", exc)
except IOError as exc:
    print("Input/Output error detected. Exiting.", exc)
except Exception:
    print("Unexpected exception encountered. Exiting.")
    traceback.print_exc(limit=1, file=sys.stdout)
finally:
    gps_poller.running = False
    gps_poller.join()  # wait for the thread to finish what it's doing
    dbconnection.close()
    print("Done.\nExiting.")
    sys.exit(0)
```


A few parts of this code need explanation. The readings are stored in the dictionary 'results', which is first populated by a call to obd_recorder.get_obd_data(). This call loops through all the variables we asked the recorder to measure (the list logitems_full) and reads their values. Once a minute, the dictionary is augmented with the current DTC codes. DTC stands for Diagnostic Trouble Code: a standardized code (some are generic, some manufacturer-specific) that the vehicle's on-board computers store to report a fault condition in the vehicle or engine. Next, the results dictionary is augmented with the username, the vehicle and the mobile SIM card parameters (ICCID and IMEI). Finally, we add the GPS readings.

Each reading therefore combines information from several sources: the CAN bus, the SIM card, user-provided data and GPS signals.

When all the data has been gathered in the record, we serialize it to JSON, save it in the CAR_READINGS table and commit the changes.
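To make the shape of one stored record concrete, here is a sketch that builds a reading from plain dictionaries instead of the live recorder and gpsd objects. build_reading and store_reading are hypothetical helpers, and the `fix` keys are stand-ins for the gpsd.fix attributes used in the loop. Note that the location follows GeoJSON, which lists longitude before latitude; MongoDB's geospatial queries expect the same order, so the location field can be used with a 2dsphere index once it reaches the cloud database.

```python
import json
import sqlite3


def build_reading(obd_values: dict, identity: dict, fix: dict, eventtime: str) -> dict:
    """Combine one pass of OBD values with identity and GPS data, mirroring the recorder loop.

    `fix` is a plain dict standing in for gpsd.fix (hypothetical keys: lat, lon, track,
    altitude, climb, speed).
    """
    reading = dict(obd_values)        # CAN bus readings, e.g. {"rpm": 1850, "speed": 42}
    reading.update(identity)          # username, vehicle, iccid, imei
    reading["eventtime"] = eventtime  # UTC timestamp in ISO 8601 format
    # GeoJSON Point: coordinates are [longitude, latitude], in that order
    reading["location"] = {"type": "Point", "coordinates": [fix["lon"], fix["lat"]]}
    reading["heading"] = fix["track"]
    reading["altitude"] = fix["altitude"]
    reading["climb"] = fix["climb"]
    reading["gps_speed"] = fix["speed"]  # kept separate from the OBD "speed"
    return reading


def store_reading(conn: sqlite3.Connection, reading: dict) -> int:
    """Insert the reading into CAR_READINGS as a JSON document and return its row id."""
    cur = conn.execute("INSERT INTO CAR_READINGS(EVENTTIME, DEVICEDATA) VALUES (?, ?)",
                       (reading["eventtime"], json.dumps(reading)))
    conn.commit()
    return cur.lastrowid
```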

Uploading the data to the cloud

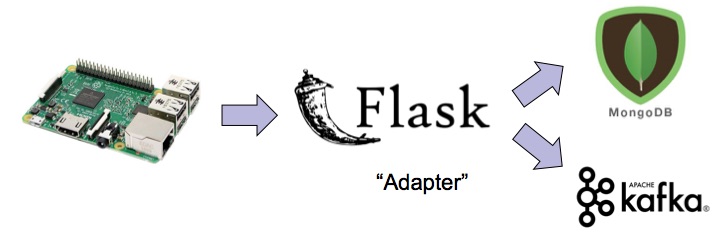

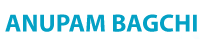

Note that none of the data saved so far has left the machine; it is all stored locally on the Raspberry Pi. Now it is time to build a mechanism to send it to the cloud. This data must be

- summarized

- compressed

- encrypted

before we can upload it to our server. On the server side, the same record needs to be decrypted, uncompressed and then stored in more persistent storage, where one can run Big Data analyses. At the same time, it needs to be pushed to a messaging queue to make it available for stream processing, mainly for alerting purposes.

Stability and Thread-safety

The driver for uploading data to the cloud is a cronjob that runs every minute. We could also write a program with an internal timer that runs as a daemon, but after a lot of experimentation, especially with large data sets, I realized that an internal timer leads to instability in the long run. A program that runs forever may accumulate garbage on the heap over time and eventually freeze. A program invoked through a cronjob wakes up, does its job for that moment and exits. That way it always stays out of the way of the data collection program and keeps the machine healthy.
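One practical consequence of the cron approach: if a run ever takes longer than a minute (say, on a slow mobile network), cron starts the next copy while the previous one is still working. A common guard, sketched below as an assumption rather than something the repository does, is a non-blocking exclusive lock on a lock file through fcntl.flock(); a run that cannot get the lock simply skips that minute. single_instance and the lock-file path are hypothetical, and fcntl is Unix-only.

```python
import fcntl
from contextlib import contextmanager


@contextmanager
def single_instance(lock_path: str):
    """Yield True if this run holds an exclusive lock on lock_path, False if another run does."""
    handle = open(lock_path, "w")
    try:
        try:
            # LOCK_NB: fail immediately instead of waiting for the other run to finish
            fcntl.flock(handle, fcntl.LOCK_EX | fcntl.LOCK_NB)
            acquired = True
        except BlockingIOError:
            acquired = False  # a previous run is still working; skip this minute
        yield acquired
    finally:
        handle.close()  # closing the file releases the lock
```

In the transmitter, the collect, transmit and cleanup calls would go inside `with single_instance('/tmp/obd_transmitter.lock') as got_lock:`, returning early when got_lock is False.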

Along the same lines, I also need to mention thread-safety as it pertains to SQLite3. The new task I am about to attempt is summarizing the data collected by the recorder. So I could technically use the same database, the single file called obd2data.db, right? Not so fast. Because the recorder runs in an infinite loop and constantly writes to this database, writing another table to the same database from a second process runs into concurrency issues, and the table can get corrupted. I tried this initially, and realized it was not a stable architecture when I saw the system freeze or found corrupted data. So I altered the design to write the summary to a different database, leaving the raw-data database effectively read-only for the summarizer, apart from a small book-keeping update to the LAST_PROCESSED table.

Data Compactor and Transmitter

To accomplish the task of transmitting the summarized data to the cloud, let us write a class for it. You will find it in the file obd_transmitter.py.

The main loop that does the task is as follows:

```python
import time
from random import randint

DataCompactor.collect()

# We do not want the server to be pounded with requests all at the same time,
# so we wait a random number of seconds to spread the uploads over the next 30 seconds.
# This brings the max wait time per minute to 40 seconds, which still leaves 20 seconds to do the job (summarize + transmit).
waitseconds = randint(0, 30)
if with_wait_time:
    time.sleep(waitseconds)

DataCompactor.transmit()
DataCompactor.cleanup()
```


There are three tasks - collect, transmit and cleanup. Let us take a look at each of these individually.

Collect and summarize

The following code creates one packet of data for each minute, encrypts it, compresses it and stores it in a separate database, ready for transmission. There are finer details in each of these steps that I will explain, but let's look at the code first.

```python
import base64
import json
import sqlite3
import zlib
from datetime import datetime

from Crypto import Random
# AESCipher, PKS1_OAEPCipher and DataCompactor are classes defined in the repository

# First find out the id of the record that was included in the last compaction task
dbconnection = sqlite3.connect('/opt/driver-signature-raspberry-pi/database/obd2data.db')
dbcursor = dbconnection.cursor()
dbcursor.execute('SELECT LAST_PROCESSED_ID FROM LAST_PROCESSED WHERE TABLE_NAME = ? LIMIT 1',
                 ("CAR_READINGS",))
bookkeepingRow = dbcursor.fetchone()
lastId = bookkeepingRow[0] if bookkeepingRow is not None else 0  # no row yet: start from scratch

# Collect data till the last second of the previous minute, but not including the current minute
nowTime = datetime.utcnow().isoformat()  # Example: 2017-05-14T19:51:29.071710 in ISO 8601 extended format
timeTillLastMinuteStr = nowTime[:17] + "00.000000"  # e.g. 2017-05-14T19:51:00.000000
dbcursor.execute('SELECT ID, DEVICEDATA FROM CAR_READINGS WHERE ID > ? AND EVENTTIME < ? ORDER BY ID',
                 (lastId, timeTillLastMinuteStr))
allRecords = []
finalId = lastId
for recordId, deviceData in dbcursor.fetchall():
    allRecords.append(json.loads(deviceData))
    finalId = recordId

# Remember how far we got: insert the book-keeping row on the very first run, update it afterwards
if bookkeepingRow is None:
    dbcursor.execute('INSERT INTO LAST_PROCESSED (TABLE_NAME, LAST_PROCESSED_ID) VALUES (?,?)',
                     ("CAR_READINGS", finalId))
else:
    dbcursor.execute('UPDATE LAST_PROCESSED SET LAST_PROCESSED_ID = ? WHERE TABLE_NAME = ?',
                     (finalId, "CAR_READINGS"))
dbconnection.commit()  # Save (commit) the changes
dbconnection.close()   # And close it before moving on
print("Collecting all records till %s comprising IDs from %d to %d ..." % (timeTillLastMinuteStr, lastId, finalId))

# Break the data down into chunks of one minute each and store one record per minute
minutePackets = {}
for record in allRecords:
    eventTimeByMinute = record["eventtime"][:17] + "00.000000"
    minutePackets.setdefault(eventTimeByMinute, []).append(record)

summarizationItems = ['load', 'rpm', 'timing_advance', 'speed', 'altitude', 'gear', 'intake_air_temp',
                      'gps_speed', 'short_term_fuel_trim_2', 'o212', 'short_term_fuel_trim_1', 'maf',
                      'throttle_pos', 'climb', 'temp', 'long_term_fuel_trim_1', 'heading', 'long_term_fuel_trim_2']

dbconnection = sqlite3.connect('/opt/driver-signature-raspberry-pi/database/obd2summarydata.db')
dbcursor = dbconnection.cursor()
for minuteStamp, minutePack in minutePackets.items():
    packet = {
        "timestamp": minuteStamp,
        "data": minutePack,
        "summary": DataCompactor.summarize(minutePack, summarizationItems),
    }
    packetStr = json.dumps(packet)
    dataSize = len(packetStr)

    # Create an AES encryptor with a fresh random 256-bit key for this packet
    aesCipherForEncryption = AESCipher()
    symmetricKey = Random.get_random_bytes(32)
    aesCipherForEncryption.setKey(symmetricKey)
    encryptedPacketStr = aesCipherForEncryption.encrypt(packetStr)

    # Compress the packet and base64-encode it so that it can be transmitted
    compressedPacket = base64.b64encode(zlib.compress(encryptedPacketStr))

    # Now do asymmetric encryption of the key using RSA with PKCS#1 OAEP padding
    pks1OAEPForEncryption = PKS1_OAEPCipher()
    pks1OAEPForEncryption.readEncryptionKey('encryption.key')
    symmetricKeyEncrypted = base64.b64encode(pks1OAEPForEncryption.encrypt(symmetricKey))

    # Save the packet to the summary database
    dbcursor.execute('INSERT INTO PROCESSED_READINGS(EVENTTIME, DEVICEDATA, ENCKEY, DATASIZE) VALUES (?,?,?,?)',
                     (minuteStamp, compressedPacket, symmetricKeyEncrypted, dataSize))

dbconnection.commit()  # Save (commit) the changes
dbconnection.close()   # And close it before exiting
```


For book-keeping, I keep the last-processed Id in a separate table, LAST_PROCESSED. Every time I successfully process a batch of records, I save the last-processed Id in this table so that the next run can pick up from there. Remember, this program is triggered by a cronjob that runs every minute. You will find the cron description in the file crontab.txt in the scripts directory.

Then we collect all the new records from the CAR_READINGS table into an array, allRecords, in which each item is a rich document parsed from the JSON payload. One important point to note is that we do not include the current minute, since it may be incomplete. Next, we group the records by the minute in which they were recorded, so that only the whole minutes that remain to be summarized are picked up and sent over. Finally, we open a connection to a different database (stored in a separate file, obd2summarydata.db) to store the summary data.
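The minute arithmetic rests on string slicing: the first 17 characters of an ISO 8601 timestamp such as '2017-05-14T19:51:29.071710' are '2017-05-14T19:51:', so appending '00.000000' yields the start of that minute. The sketch below (hypothetical function names, not from the repository) combines that truncation with the exclusion of the current minute. It uses a strict < comparison so that a reading stamped exactly at the start of the current minute is left for the next run. The slice also copes with datetime.isoformat() dropping the fractional part when the microseconds happen to be zero.

```python
from collections import defaultdict


def minute_of(iso_timestamp: str) -> str:
    """Truncate an ISO 8601 timestamp to the start of its minute."""
    # The first 17 characters are 'YYYY-MM-DDTHH:MM:'
    return iso_timestamp[:17] + "00.000000"


def group_complete_minutes(records: list, now_iso: str) -> dict:
    """Bucket records by minute, leaving out the (possibly incomplete) current minute."""
    cutoff = minute_of(now_iso)
    packets = defaultdict(list)
    for record in records:
        if record["eventtime"] < cutoff:  # strict: the current minute starts at the cutoff
            packets[minute_of(record["eventtime"])].append(record)
    return dict(packets)
```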

The loop over the minute packets does the actual work of creating the summarized packets. Each packet has three fields: the time stamp (minute only, no seconds), all the readings collected during that minute, and the summary data (i.e. aggregates over the minute). The summary is computed by a summarize function that I describe later. The packet is then encrypted with AES, using a randomly generated 256-bit key. This two-step scheme is needed because RSA can only encrypt a message shorter than its key (a few hundred bytes at most for typical key sizes), while our packets are large and vary in size. So the packet itself is encrypted with a fresh symmetric key, and that key is sent to the server in encrypted form so that the server can decrypt the packet. The encrypted packet is compressed and base64-encoded to prepare it for transmission. The last step is to encrypt the symmetric key itself so that it can travel in the same payload. For this we use RSA with PKCS#1 OAEP padding (the helper class in the repository is called PKS1_OAEPCipher) and the server's public key, stored on the Raspberry Pi as encryption.key; the matching private key stays on the server. The event time (whole minute), the compressed and encrypted packet, the encrypted key and the data size are saved as a record in the table PROCESSED_READINGS.

Note that when the packet is created you have a choice: send only the summarized data, or send the entire raw records along with the summary. If you want to save bandwidth, you would do most of the "edge-processing" work on the Raspberry Pi itself and send only the summary record each time. However, in this experiment I wanted to do some additional work on the cloud at a finer granularity than once a minute. As shown in part 3 of this series, I actually summarize once every 15 seconds for the driver signature analysis. So I needed to send all the raw data as well as the summary in my packet, thereby increasing the bandwidth requirements. However, compression helped a lot in reducing the size of the original packet, by almost 90%.
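The trade-off between sending the summary alone and sending it together with the raw readings is easy to measure with zlib. In this sketch, build_packet, the include_raw switch and the synthetic readings are hypothetical; the sizes it prints depend entirely on the data fed to it.

```python
import json
import zlib


def build_packet(timestamp, readings, summary, include_raw=True):
    """Assemble one minute's packet, optionally leaving out the raw readings."""
    packet = {"timestamp": timestamp, "summary": summary}
    if include_raw:
        packet["data"] = readings
    return json.dumps(packet).encode("utf-8")


def sizes(packet_bytes):
    """Return (uncompressed size, zlib-compressed size) in bytes."""
    return len(packet_bytes), len(zlib.compress(packet_bytes, 9))


# Synthetic minute of data: one reading per second, with the same keys repeated in every record
readings = [{"rpm": 800 + i, "speed": 40, "throttle_pos": 12.5} for i in range(60)]
summary = {"rpm": {"count": 60, "mean": 829.5, "min": 800, "max": 859}}
full = build_packet("2018-05-01T10:01", readings, summary, include_raw=True)
summary_only = build_packet("2018-05-01T10:01", readings, summary, include_raw=False)
print("raw + summary:", sizes(full))
print("summary only: ", sizes(summary_only))
```

Repeated JSON keys are what make the raw records compress well, which is why shipping them is less costly than their uncompressed size suggests.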

Data Aggregation

Let me now describe how the summarization is done. This is the "edge-computing" part of the process, which is difficult to do on generic devices. Any IoT device (CalAmp, for example) will be able to do most of the work of capturing OBD data and transmitting it to the cloud. But such devices may not be capable enough to do the summarization, which is why one needs a more powerful computer like a Raspberry Pi for the job. All I do for summarization is the following:

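import numpy
from scipy import stats


def summarize(readings, items):
    # Parameter list assumed for illustration: readings holds one minute of records,
    # items is the summarizationItems list
    summary = {}
    for item in items:
        summaryItem = {}
        itemarray = []
        for reading in readings:
            # .get() skips readings where this item is missing; only numbers are aggregated
            if isinstance(reading.get(item), (float, int)):
                itemarray.append(reading[item])
        summaryItem["count"] = len(itemarray)
        if len(itemarray) > 0:
            # float() turns numpy scalars such as numpy.int64 into plain floats that json.dumps accepts
            summaryItem["mean"] = float(numpy.mean(itemarray))
            summaryItem["median"] = float(numpy.median(itemarray))
            # .mode is an array in older SciPy and a scalar from SciPy 1.11 on; atleast_1d handles both
            summaryItem["mode"] = float(numpy.atleast_1d(stats.mode(itemarray).mode)[0])
            summaryItem["stdev"] = float(numpy.std(itemarray))
            summaryItem["variance"] = float(numpy.var(itemarray))
            summaryItem["max"] = float(numpy.max(itemarray))
            summaryItem["min"] = float(numpy.min(itemarray))
        summary[item] = summaryItem
    return summary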

Look at line 56 of the previous block of code. You will see an array of items describing everything we need to summarize, held in the variable summarizationItems. For each item in this list, we find the mean, median, mode, standard deviation, variance, maximum and minimum over each minute (the seven numpy and stats calls). The summarized items are appended to each record before it is saved to the summary database.
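If installing NumPy and SciPy on the Pi is inconvenient, the same aggregates can be computed with Python's standard statistics module. This is an alternative sketch, not the repository's code, and summarize_stdlib is a hypothetical name. numpy.std and numpy.var default to the population formulas (ddof=0), so pstdev and pvariance are the matching functions; for ties, scipy.stats.mode returns the smallest of the most common values, so the sketch takes min(multimode(...)). statistics.fmean and statistics.multimode need Python 3.8 or later.

```python
import statistics


def summarize_stdlib(readings, items):
    """Per-item count, mean, median, mode, stdev, variance, max and min."""
    summary = {}
    for item in items:
        values = [r[item] for r in readings if isinstance(r.get(item), (int, float))]
        stats_for_item = {"count": len(values)}
        if values:
            stats_for_item.update(
                mean=statistics.fmean(values),
                median=statistics.median(values),
                mode=min(statistics.multimode(values)),  # smallest mode, like scipy.stats.mode
                stdev=statistics.pstdev(values),         # population stdev, like numpy.std
                variance=statistics.pvariance(values),   # population variance, like numpy.var
                max=max(values),
                min=min(values),
            )
        summary[item] = stats_for_item
    return summary


readings = [{"rpm": 800, "speed": 30}, {"rpm": 900, "speed": 30}, {"rpm": 900, "speed": None}]
result = summarize_stdlib(readings, ["rpm", "speed"])
```

The None speed value is skipped by the isinstance check, exactly as non-numeric readings are skipped in the NumPy version.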

Transmitting the data to the cloud

To transmit the data to the cloud you first need to set up an end-point. I will show you later how to do that on the server. For now, let us assume that you already have one available. Then, on the client side, you can do the following to transmit the data:

import sqlite3

import requests

base_url = "http://OBD-EDGE-DATA-CATCHER-43340034802.us-west-2.elb.amazonaws.com"  # for accessing it from outside the firewall
url = base_url + "/obd2/api/v1/17350/upload"

dbconnection = sqlite3.connect('/opt/driver-signature-raspberry-pi/database/obd2summarydata.db')
dbcursor = dbconnection.cursor()
dbupdatecursor = dbconnection.cursor()

# String literals take single quotes in SQL; SQLite treats double-quoted text as an identifier
dbcursor.execute("SELECT ID, EVENTTIME, TRANSMITTED, DEVICEDATA, ENCKEY, DATASIZE "
                 "FROM PROCESSED_READINGS WHERE TRANSMITTED = 'FALSE' ORDER BY EVENTTIME")

# Fetch all pending rows first so the commits below cannot interfere with the open SELECT
for row in dbcursor.fetchall():
    rowid, eventtime, _, devicedata, enckey, datasize = row
    payload = {'size': str(datasize), 'key': enckey, 'data': devicedata, 'eventtime': eventtime}
    try:
        response = requests.post(url, json=payload, timeout=30)
    except requests.exceptions.RequestException:
        break  # network problem: the remaining rows are retried on the next cron run
    if response.status_code == 201:
        dbupdatecursor.execute("UPDATE PROCESSED_READINGS SET TRANSMITTED = 'TRUE' WHERE ID = ?", (rowid,))
        dbconnection.commit()  # Save (commit) the changes

dbconnection.commit()  # Save (commit) the changes
dbconnection.close()  # And close it before exiting

The end-point (which I will show you later) accepts POST requests. You also need to configure a load balancer that lets connections from the outside world through the firewall to the server inside it. Establish adequate security measures to ensure that this tunnel exposes only a specific port on the internal server.

The first few statements set up the connection to the summary database. In its table I store a flag, TRANSMITTED, that indicates whether the record has been transmitted. For every record that has not been transmitted yet (the SELECT query), I build a payload comprising the packet size, the encrypted key for decrypting the packet, the compressed/encrypted data packet and the event time. This payload is then POSTed to the end-point. If the server answers with HTTP 201 (Created), the TRANSMITTED flag is set to TRUE for this packet so that we do not attempt to send it again.
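Because a row is marked as sent only after a 201 response, a failed upload is simply retried on the next cron run. The sketch below isolates that logic so it can be exercised without a live end-point. The send callable, the transmit_pending name and the in-memory table are assumptions for illustration; in a real deployment send would wrap requests.post and return response.status_code.

```python
import sqlite3


def transmit_pending(conn, send):
    """Send every untransmitted row via send(payload) -> HTTP status; flag rows answered with 201."""
    rows = conn.execute(
        "SELECT ID, EVENTTIME, DEVICEDATA, ENCKEY, DATASIZE FROM PROCESSED_READINGS "
        "WHERE TRANSMITTED = 'FALSE' ORDER BY EVENTTIME"
    ).fetchall()
    sent = 0
    for rowid, eventtime, devicedata, enckey, datasize in rows:
        payload = {"size": str(datasize), "key": enckey, "data": devicedata, "eventtime": eventtime}
        if send(payload) == 201:
            conn.execute("UPDATE PROCESSED_READINGS SET TRANSMITTED = 'TRUE' WHERE ID = ?", (rowid,))
            conn.commit()
            sent += 1
    return sent


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE PROCESSED_READINGS (ID INTEGER PRIMARY KEY, EVENTTIME TEXT, "
             "TRANSMITTED TEXT, DEVICEDATA TEXT, ENCKEY TEXT, DATASIZE INTEGER)")
conn.executemany(
    "INSERT INTO PROCESSED_READINGS (EVENTTIME, TRANSMITTED, DEVICEDATA, ENCKEY, DATASIZE) "
    "VALUES (?, 'FALSE', 'blob', 'key', 4)",
    [("2018-05-01T10:01",), ("2018-05-01T10:02",)],
)
flaky = iter([201, 503])                                  # the second upload fails
first = transmit_pending(conn, lambda payload: next(flaky))
second = transmit_pending(conn, lambda payload: 201)      # next run retries only the failed row
```

Injecting the sender keeps the retry semantics testable while leaving the HTTP details in one place.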

Cleanup

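The cleanup operation is pretty simple. Once an hour (when the minute of the hour is zero), I delete all records from the summary table that are more than 15 days old and then vacuum the database file to reclaim the freed space.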

import sqlite3
from datetime import datetime, timedelta

localtime = datetime.now()
# The script runs every minute from cron; purge only at the top of each hour
if localtime.minute == 0:
    fifteendaysago_str = (localtime - timedelta(days=15)).isoformat()
    dbconnection = sqlite3.connect('/opt/driver-signature-raspberry-pi/database/obd2summarydata.db')
    dbcursor = dbconnection.cursor()
    # Assumes EVENTTIME is stored as ISO-8601 text, which sorts chronologically
    dbcursor.execute('DELETE FROM PROCESSED_READINGS WHERE EVENTTIME < ?', (fifteendaysago_str,))
    dbconnection.commit()
    # VACUUM compacts the whole file; its optional argument is a schema name
    # (such as main), not a table name, so no table is passed
    dbcursor.execute('VACUUM')
    dbconnection.commit()  # Save (commit) the changes
    dbconnection.close()  # And close it before exiting
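The retention rule can be packaged as a function and checked against an in-memory database. This sketch relies on the same assumption as the query above, that EVENTTIME holds ISO-8601 strings, which compare in chronological order; purge_older_than is a hypothetical name.

```python
import sqlite3
from datetime import datetime, timedelta


def purge_older_than(conn, now, days=15):
    """Delete PROCESSED_READINGS rows older than `days` and compact the database."""
    cutoff = (now - timedelta(days=days)).isoformat()
    deleted = conn.execute(
        "DELETE FROM PROCESSED_READINGS WHERE EVENTTIME < ?", (cutoff,)
    ).rowcount
    conn.commit()
    conn.execute("VACUUM")  # VACUUM cannot run inside a transaction, hence the commit first
    return deleted


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE PROCESSED_READINGS (ID INTEGER PRIMARY KEY, EVENTTIME TEXT)")
conn.executemany("INSERT INTO PROCESSED_READINGS (EVENTTIME) VALUES (?)",
                 [("2018-04-01T09:00",), ("2018-04-20T09:00",), ("2018-05-01T09:00",)])
conn.commit()
removed = purge_older_than(conn, now=datetime(2018, 5, 1, 10, 0))
```

Passing now in explicitly, instead of calling datetime.now() inside, is what makes the cutoff reproducible in a test.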

Server on the Cloud

As the final piece of this article, let me describe how to set up the end-point on the server. Several pieces are needed to put it together, yet surprisingly all of this is achieved in a relatively small amount of code, thanks to the crispness of the Python language.

import base64
import json
import zlib

from flask import jsonify, request
from kafka import KafkaProducer

# app, mongo_collection, InvalidUsage, AESCipher and PKS1_OAEPCipher are defined
# elsewhere in the server code (server/flaskserver.py); the route and function
# name below are assumptions for illustration.
@app.route('/obd2/api/v1/<account>/upload', methods=['POST'])
def upload(account):
    print(request.content_type)
    if not request.json or 'size' not in request.json:
        raise InvalidUsage('Invalid usage of this web-service detected', status_code=400)
    size = int(request.json['size'])
    decoded_compressed_record = request.json.get('data', "")
    symmetricKeyEncrypted = request.json.get('key', "")

    # Undo the transport encoding: base64-decode, then zlib-decompress
    compressed_record = base64.b64decode(decoded_compressed_record)
    encrypted_json_record_str = zlib.decompress(compressed_record)

    # Recover the AES key with the server's private key, then decrypt the packet
    pks1OAEPForDecryption = PKS1_OAEPCipher()
    pks1OAEPForDecryption.readDecryptionKey('decryption.key')
    symmetricKeyDecrypted = pks1OAEPForDecryption.decrypt(base64.b64decode(symmetricKeyEncrypted))
    aesCipherForDecryption = AESCipher()
    aesCipherForDecryption.setKey(symmetricKeyDecrypted)
    json_record_str = aesCipherForDecryption.decrypt(encrypted_json_record_str)
    record_as_dict = json.loads(json_record_str)

    # Add the account ID to the reading here
    record_as_dict["account"] = account
    post_id = mongo_collection.insert_one(record_as_dict).inserted_id
    print('Saved as Id: %s' % post_id)

    # A producer per request is simple but slow; it could instead be created once at startup
    producer = KafkaProducer(bootstrap_servers=['your.kafka.server.com:9092'],
                             value_serializer=lambda m: json.dumps(m).encode('ascii'),
                             retries=5)

    # send the individual records to the Kafka queue for stream processing
    for counter, raw_reading in enumerate(record_as_dict["data"]):
        raw_reading["id"] = str(post_id) + str(counter)
        raw_reading["account"] = account
        producer.send("car_readings", raw_reading)
    producer.flush()

    # send the summary to the Kafka queue in case there is some stream processing required for that as well
    raw_summary = record_as_dict["summary"]
    raw_summary["id"] = str(post_id)
    raw_summary["account"] = account
    raw_summary["eventTime"] = record_as_dict["timestamp"]
    producer.send("car_summaries", raw_summary)
    producer.flush()

    return jsonify({'title': str(size) + ' bytes received'}), 201

I decided to use MongoDB as persistent storage for records and Kafka as the messaging server for streaming. The following tasks are done in order in this function:

- Check for invalid usage of this web service, and raise an exception if the request is illegal. A simple test for the presence of 'size' in the payload is used for this.

- Base64-decode and decompress the packet (the b64decode and zlib.decompress calls).

- Decrypt the transmission key using the private key (decryption.key) stored on the server (PKS1_OAEPCipher).

- Decrypt the data packet with the recovered symmetric key (AESCipher).

- Convert the JSON record to a Python dictionary for digging deeper into it (json.loads).

- Tag the record with the account ID and save it in MongoDB (insert_one).

- Push each raw reading to the Kafka topic car_readings and the minute's summary to the topic car_summaries.
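The Kafka step fans one uploaded record out into many messages. Separating that logic from KafkaProducer makes it testable without a broker. The fan_out helper below is hypothetical, but it assigns ids and topics the same way the server code does (each reading's id is the MongoDB id with the reading's index appended); unlike the server code, it copies the dictionaries instead of modifying them in place.

```python
def fan_out(record, post_id, account):
    """Return (topic, message) pairs: one per raw reading plus one for the summary."""
    messages = []
    for counter, raw_reading in enumerate(record["data"]):
        message = dict(raw_reading, id=str(post_id) + str(counter), account=account)
        messages.append(("car_readings", message))
    summary = dict(record["summary"], id=str(post_id), account=account,
                   eventTime=record["timestamp"])
    messages.append(("car_summaries", summary))
    return messages


record = {"timestamp": "2018-05-01T10:01",
          "data": [{"rpm": 900}, {"rpm": 1500}],
          "summary": {"rpm": {"count": 2, "mean": 1200.0}}}
for topic, message in fan_out(record, "5ae8", "17350"):
    print(topic, message)
# With a real producer: for topic, message in fan_out(...): producer.send(topic, message)
```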

This functionality is exposed as a web service using a Flask server. You will find the rest of the server code in the file flaskserver.py in the folder 'server'.

I have covered the salient features to put this together, skipping the other obvious pieces which you can peruse yourself by cloning the entire repository.

Conclusion

I know this has been a long post, but I needed to cover a lot of things. And we have not even started working on the data-science part. You may have heard that a data scientist spends 90% of their time preparing data. Well, this task was even bigger: we had to set up the hardware and software to generate raw real-time data and store it in real time before we could even start thinking about data science. If you are curious to see a sample of the collected data, you can find it here.

But now that this work is done, and we have taken special care that the generated data is in a nicely formatted form, the rest of the task should be easier. You will find the data science related stuff in the third and final episode of this series.

Go to Part 3 of this series

The natural database for this purpose is SQLite, which ships with most Linux distributions and is available in Python through the built-in sqlite3 module. It is a file-based database, which means one has to specify a file when opening it. Make a clone of the repository in the /opt directory on your Raspberry Pi.
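Opening a file-based SQLite database from Python takes one call to sqlite3.connect, which creates the file if it does not exist yet. The sketch below uses a temporary directory instead of /opt/driver-signature-raspberry-pi/database, and the file name and CAR_READINGS columns are assumptions for illustration rather than the repository's schema.

```python
import json
import os
import sqlite3
import tempfile

db_dir = tempfile.mkdtemp()                     # stand-in for the repository's database folder
db_path = os.path.join(db_dir, "obd2data.db")   # hypothetical file name

conn = sqlite3.connect(db_path)                 # creates the file on first use
conn.execute("CREATE TABLE IF NOT EXISTS CAR_READINGS ("
             "ID INTEGER PRIMARY KEY AUTOINCREMENT, EVENTTIME TEXT, DEVICEDATA TEXT)")
conn.execute("INSERT INTO CAR_READINGS (EVENTTIME, DEVICEDATA) VALUES (?, ?)",
             ("2018-05-01T10:01:12", json.dumps({"rpm": 900, "speed": 40})))
conn.commit()
conn.close()

# The data persists in the file, so a separate process (such as the cron job) can read it
conn = sqlite3.connect(db_path)
rows = conn.execute("SELECT EVENTTIME, DEVICEDATA FROM CAR_READINGS").fetchall()
conn.close()
```

Because each process opens the file independently, the capture loop and the once-a-minute summarizer can share one database without any server process.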